What is MatCo?

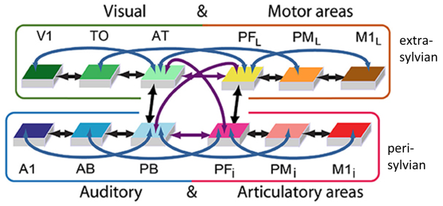

Example of a brain-constrained network and its corresponding cortical areas. The network is used for simulating word processing and semantic learning in the human brain. For an explanation, see the text below (adapted from Pulvermüller et al., 2021).

Humans have immense linguistic and cognitive capabilities: we acquire tens of thousands of words effortlessly, combine them in multiple ways to form novel constructions and use them for a broad range of communicative functions. In contrast, our closest evolutionary neighbors, non-human primates, typically learn a maximum of 100 signs and exceed this number only with extensive training. Their ability to combine these signs with each other is quite limited, and the roles the signs play in their interactions are similarly restricted.

The reasons for such species-specific differences in cognitive abilities most likely lie in the genetic code, and they are equally likely to be manifest in features of the biological hardware necessary for language and thought: the many neurons in the human brain, the learning mechanisms governing their plasticity, their local and long-distance connections, their activating and deactivating influence on each other and their joint mechanistic interaction in local clusters, large neuronal circuits, networks, areas and brains.

How such neurostructural and neurofunctional features determine and, in fact, bring about specific cognitive and linguistic functions is the main question addressed in the ERC Advanced Grant project called MatCo: Material Constraints enabling human cognition.

The MatCo project addresses these issues by building neural networks of a specific kind. These brain-constrained networks implement a range of structural and functional features of real brains, so that, for example, specific differences between the anatomy of the human and the monkey brain can be directly implemented in the model and the consequences of these differences for network functionality explored. Among the features implemented are local ones addressing neuron function and plasticity, mesoscopic ones addressing connectivity and interaction of local circuits, and macroscopic ones such as area structure, between-area connectivity and large-scale network interaction. Brain-constrained networks thus realize neurobiological features revealed by anatomical and physiological neuroscience research. These brain-like networks can then be examined in ‘experiments’ probing cognitive functions, which are analogous to experiments carried out with human subjects. The resultant observations and their comparison with data from humans may allow for careful conclusions on the neuromechanistic basis of cognition and language.

Example Network

One of the brain-constrained models used in the MatCo project consists of twelve cortical areas. The perisylvian language network is represented by three auditory (primary auditory cortex, auditory belt, modality-general parabelt) and three articulatory areas (primary motor cortex, inferior premotor cortex, multimodal prefrontal motor cortex). In addition to the perisylvian language network, we model several extrasylvian sensorimotor and connector hub areas that have been shown to contribute to language processing: three visual (primary visual cortex, temporo-occipital areas, anterior-temporal areas) and three motor areas (lateral primary motor cortex, premotor cortex, prefrontal cortex).

As the diagram at the top of the page shows, the areas are connected with each other. Existing anatomical pathways in the brain provide one of the constraints determining network structure. The between-area connections include direct next-neighbor connections (black arrows), links between second-next neighbors or 'jumping links' (blue arrows) and long-distance cortico-cortical connections (purple arrows).
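The distinction between next-neighbor and 'jumping' links can be illustrated with a minimal Python sketch. It is not the MatCo implementation: it assumes, purely for illustration, that the six perisylvian areas named above form a linear chain from auditory to articulatory cortex, and the function name `classify_links` is hypothetical. Long-distance cortico-cortical connections and the extrasylvian areas are left out, because their exact wiring is specified only in the cited publications.

```python
# Six perisylvian areas named in the text, ordered from auditory to articulatory.
# ASSUMPTION: treating them as one linear chain is an illustrative simplification,
# not the published connectivity of the model.
PERISYLVIAN_CHAIN = [
    "primary auditory cortex",
    "auditory belt",
    "parabelt",
    "prefrontal motor cortex",
    "inferior premotor cortex",
    "primary motor cortex",
]


def classify_links(chain):
    """Map each pair of areas at chain distance 1 or 2 to a link type."""
    links = {}
    for i, source in enumerate(chain):
        # Distance 1: direct next-neighbor link; distance 2: 'jumping link'.
        for step, kind in ((1, "next_neighbor"), (2, "jumping")):
            j = i + step
            if j < len(chain):
                links[(source, chain[j])] = kind
    return links


links = classify_links(PERISYLVIAN_CHAIN)
for (source, target), kind in links.items():
    print(f"{source} <-> {target}: {kind}")
```

Under this chain assumption, the sketch yields five next-neighbor links and four jumping links; each pair stands for a connection between two areas, without any claim about its direction or strength.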

Each of the small black rectangles in the diagram displays neuronal activity in one cortical area of the network. Each colored pixel indexes one active artificial ‘neuron’. The entire set of red pixels spread out across several of the areas is the result of the network’s ‘learning’ of one action-related word (such as ‘run’); the blue pixels show the network correlate of an object-related word (such as ‘sun’). Pixels in yellow show neurons that take part in processing both words. The network is used to simulate neurobiological mechanisms of word learning and recognition, verbal working memory, conceptual and semantic learning, etc.
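The color coding described above amounts to a set comparison between the two word-related groups of active neurons. The following Python sketch shows that logic only; the function name `colour_code`, the `(area, row, column)` cell identifiers and the example cells are hypothetical and do not come from the MatCo simulations.

```python
def colour_code(action_word_cells, object_word_cells):
    """Assign display colors to the active cells of two learned words.

    Cells active for both words are yellow; the remaining cells are red
    (action-related word only) or blue (object-related word only).
    """
    shared = action_word_cells & object_word_cells
    return {
        "red": action_word_cells - shared,
        "blue": object_word_cells - shared,
        "yellow": shared,
    }


# HYPOTHETICAL example data: each cell is (area, row, column).
run_cells = {("primary motor cortex", 3, 4), ("parabelt", 1, 2), ("auditory belt", 0, 5)}
sun_cells = {("primary visual cortex", 2, 2), ("parabelt", 1, 2), ("auditory belt", 6, 1)}

colours = colour_code(run_cells, sun_cells)
for colour, cells in colours.items():
    print(colour, sorted(cells))
```

In this toy example, only the cell in the parabelt is active for both words and would therefore be drawn in yellow.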

Topics of recent MatCo studies and related key publications

- Brain-constrained network modelling and its relationship to related neurocognitive research.

Pulvermüller, F., Tomasello, R., Henningsen-Schomers, M.R., Wennekers, T. (2021). Biological constraints on neural network models of cognitive function. Nat Rev Neurosci 22, 488–502. https://doi.org/10.1038/s41583-021-00473-5

- Verbal working memory and its mechanistic correlates emerging in the human, but not the monkey brain.

Schomers, M. R., Garagnani, M., & Pulvermüller, F. (2017). Neurocomputational Consequences of Evolutionary Connectivity Changes in Perisylvian Language Cortex. Journal of Neuroscience, 37(11), 3045–3055. https://doi.org/10.1523/JNEUROSCI.2693-16.2017

- Semantic binding between words and referent objects and actions emerging in a brain-constrained network model.

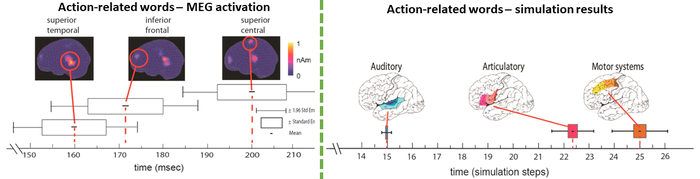

Tomasello, R., Garagnani, M., Wennekers, T., & Pulvermüller, F. (2017). Brain connections of words, perceptions and actions: A neurobiological model of spatio-temporal semantic activation in the human cortex. Neuropsychologia, 98, 111–129. https://doi.org/10.1016/j.neuropsychologia.2016.07.004

Tomasello, R., Wennekers, T., Garagnani, M. et al. (2019). Visual cortex recruitment during language processing in blind individuals is explained by Hebbian learning. Sci Rep 9, 357. https://doi.org/10.1038/s41598-019-39864-1

- Processing of concrete and abstract concepts and meanings, with special foci on the concreteness/abstractness difference and the influence of language on the formation of conceptual representations.

Henningsen-Schomers, M.R., Pulvermüller, F. (2022). Modelling concrete and abstract concepts using brain-constrained deep neural networks. Psychological Research, 86(8), 2533-2559. https://doi.org/10.1007/s00426-021-01591-6

Henningsen-Schomers, M.R., Garagnani, M., Pulvermüller, F. (2022). Influence of language on perception and concept formation in a brain-constrained deep neural network model. Philosophical Transactions of the Royal Society B: Biological Sciences. https://doi.org/10.1098/rstb.2021.0373

Funding

The MatCo project has received funding from the European Research Council (ERC) under the European Union’s Horizon 2020 research and innovation programme (grant agreement No 883811).

Host Institution

MatCo is located in the Brain Language Laboratory of the working group on Neuroscience and Pragmatics, at the Department of Philosophy and Philology, WE4 of the Freie Universität Berlin.

IT Support

Large-scale computational modelling in MatCo is supported by the High-Performance Computer service CURTA, as offered by the Zentraleinrichtung für Datenverarbeitung (ZEDAT) of the Freie Universität Berlin.